As far as my testing goes, Shrinking Window is finding better results than SMAC Optimizer, but it takes forever to run.

Would you be so kind as to tell me why you think that SMAC Optimizer is better?

QUOTE:

why you think that SMAC Optimizer is better?

Let me respond with another question:

What is the goal of optimizing a trading strategy?

Many developers try to get the best possible performance [metric value] during optimization and totally forget that these results are for the data used during optimization only.

What really counts is the behavior of the [optimized] strategy on new, unseen, future data, in other words the Out-Of-Sample performance.

After all we want to trade the strategy in real time and make as much profit as possible.

This means the optimization effort should not look for the "best solution" but for a parameter combination that works as well as possible Out-Of-Sample.

How can we do this?

The simplest thing is to check the optimized trading system on unseen data:

Divide the available historical data into (at least) two intervals: In-Sample (for example 2010 to 2018) and Out-Of-Sample (for example 2019 to 2021).

Run all your optimizations on the In-Sample interval only.

When finished, run your "best solution" on the Out-Of-Sample interval.

(You'll be surprised. Don't be sad! It's normal...)
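To make the procedure concrete, here is a minimal Python sketch of the split-then-validate idea. Everything in it is an assumption for illustration only: the price series is a synthetic random walk, `toy_score` is a made-up momentum rule, and none of the names correspond to WealthLab or finantic APIs.

```python
import random
from datetime import date


def split_in_out(bars, split_date):
    """Split (date, price) bars into In-Sample (before split_date) and Out-Of-Sample."""
    in_sample = [b for b in bars if b[0] < split_date]
    out_sample = [b for b in bars if b[0] >= split_date]
    return in_sample, out_sample


def toy_score(prices, lookback):
    """Hypothetical metric: sum of next-day returns on days when
    the price is above its value `lookback` days earlier."""
    total = 0.0
    for i in range(lookback, len(prices) - 1):
        if prices[i] > prices[i - lookback]:
            total += prices[i + 1] / prices[i] - 1.0
    return total


# Synthetic daily random walk from 2010 to 2021 (illustrative data, not market data).
random.seed(42)
price = 100.0
bars = []
for d in range(date(2010, 1, 1).toordinal(), date(2021, 12, 31).toordinal() + 1):
    price *= 1.0 + random.gauss(0.0, 0.01)
    bars.append((date.fromordinal(d), price))

# In-Sample: 2010 to 2018, Out-Of-Sample: 2019 to 2021.
in_sample, out_sample = split_in_out(bars, date(2019, 1, 1))
is_prices = [p for _, p in in_sample]
oos_prices = [p for _, p in out_sample]

# Optimize on the In-Sample interval only.
candidates = range(2, 51)
best_lookback = max(candidates, key=lambda lb: toy_score(is_prices, lb))

# Then run the "best solution" once on the unseen interval.
is_score = toy_score(is_prices, best_lookback)
oos_score = toy_score(oos_prices, best_lookback)
print(f"lookback={best_lookback}  IS={is_score:.3f}  OOS={oos_score:.3f}")
```

The important design point is that `out_sample` is never touched while choosing `best_lookback`; it is evaluated exactly once, after the optimization has finished.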

When I do this, I use some performance metrics from the IS/OS ScoreCard (available with the finantic.ScoreCard Extension), which runs the two parts mentioned above at the same time.

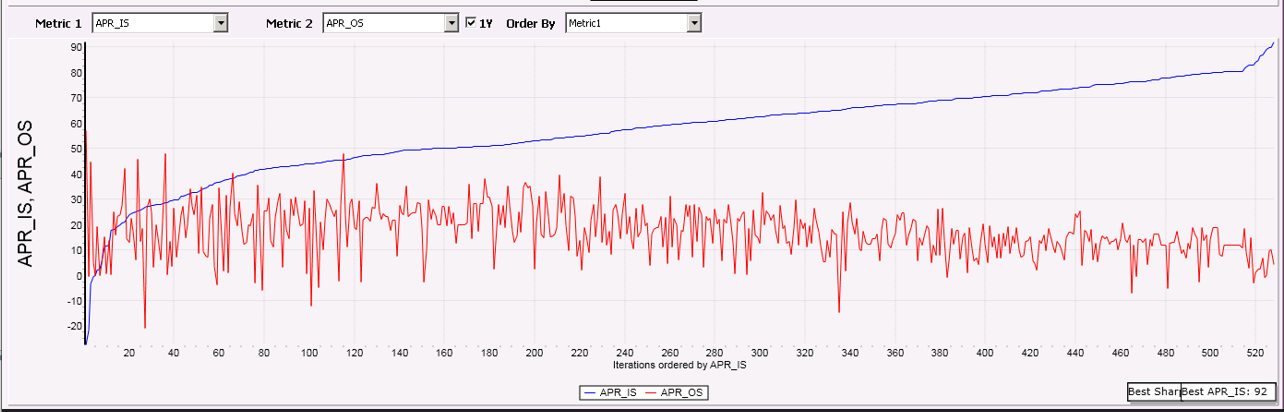

Furthermore, this creates a graph where it is possible to literally see where the overoptimization starts:

(This is SeventeenLiner and SMAC)

The blue line (In-Sample) shows the spectacular results from our optimization run. The red line (Out-Of-Sample) is the one that counts.

Please note: The "best solution" which is shown by the blue line to the right is way in "overoptimized territory" i.e. the parameter combination behind these "best APR_IS values" is useless in real trading.

The graph also hints that it is not necessary to try more than 1000 or 2000 iterations.
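As a rough sketch of how such a pair of curves could be computed and read, the Python below tracks the best In-Sample result found so far and the Out-Of-Sample result of that same parameter set, then reports the iteration at which the Out-Of-Sample curve peaks. This is an assumption about the idea behind the graph, not a description of how the ScoreCard builds it; the function names and the numbers in `runs` are made up for illustration.

```python
def best_so_far_curves(runs):
    """runs: list of (is_metric, oos_metric) in the order the optimizer tried them.

    Returns two lists of the same length: the best In-Sample metric found so far,
    and the Out-Of-Sample metric of the parameter set that produced it."""
    is_curve, oos_curve = [], []
    best_is, best_oos = float("-inf"), None
    for is_metric, oos_metric in runs:
        if is_metric > best_is:
            best_is, best_oos = is_metric, oos_metric
        is_curve.append(best_is)
        oos_curve.append(best_oos)
    return is_curve, oos_curve


def overoptimization_start(oos_curve):
    """0-based iteration at which the Out-Of-Sample curve first reaches its maximum."""
    return max(range(len(oos_curve)), key=lambda i: oos_curve[i])


# Made-up (IS, OOS) values per iteration, for illustration only.
runs = [(5.0, 4.0), (8.0, 6.0), (7.0, 9.0), (12.0, 7.0),
        (15.0, 3.0), (14.0, 8.0), (20.0, 1.0)]

is_curve, oos_curve = best_so_far_curves(runs)
peak = overoptimization_start(oos_curve)
print("IS curve: ", is_curve)
print("OOS curve:", oos_curve)
print("OOS peaks at iteration", peak)
```

In this toy data the In-Sample curve keeps climbing to the last iteration, while the Out-Of-Sample value of the current "best" peaks at iteration 3 and falls afterwards. Iterations beyond that point only improve the blue line.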

I think the point is that "peak performance" is unsustainable over time. I think we can all agree with that. In this case, the optimizer is landing on a local maximum of your solution space with very narrow (and unsustainable) boundaries.

In a perfect world, the optimizer would pick a compromise maximum with wide and far-reaching boundaries, so that as the market climate changes over time your model still falls within that maximum. The hard part is getting the optimizer to do that reliably. (In image processing, we smooth the intermediate solution prior to resampling, but I'm not so sure that would be reliable here.)
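To illustrate the smoothing idea (not to claim it is reliable for trading systems), here is a small Python sketch on a one-dimensional parameter grid. It averages each score with its immediate neighbors and then picks the maximum of the smoothed scores, so an isolated spike loses against a broad plateau. The function names, the window size, and the scores are all made up for illustration.

```python
def smooth(scores, radius=1):
    """Moving average over each point and its neighbors within `radius`
    (the window is truncated at the edges of the grid)."""
    smoothed = []
    for i in range(len(scores)):
        window = scores[max(0, i - radius): i + radius + 1]
        smoothed.append(sum(window) / len(window))
    return smoothed


def argmax(values):
    """Index of the first maximum in `values`."""
    return max(range(len(values)), key=lambda i: values[i])


# Made-up metric values along one parameter: a narrow spike at index 2
# and a broad plateau around indices 5 to 8.
scores = [1.0, 2.0, 10.0, 2.0, 1.0, 6.0, 7.0, 7.0, 6.0, 1.0]

raw_best = argmax(scores)             # lands on the narrow spike
robust_best = argmax(smooth(scores))  # lands on the plateau
print("raw best index:", raw_best)
print("smoothed best index:", robust_best)
```

The raw maximum sits on the spike, where a small shift in the parameter (or in the market) drops the score sharply. The smoothed maximum sits on the plateau, where neighboring parameter values score almost as well.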
