Let's discuss DrKoch's new strategies here!

I'm working on integrating Strategies with the Discussion forum, and this is a test of the new integration.

This is excellent. I just wanted to post two questions for DRK.

GREAT SYSTEM

What happens if you integrate TW?

The average percent profit per trade seems low. Hard to trade?

Test answered!

I'm all for applying robust statistical methods to trading strategies. That includes the use of percentiles, the median (the 50th percentile), and quartiles in strategies. Yes, I know that software support for robust methods is spotty and that the books published on robust statistics are aimed at first- and second-year graduate students in statistics to support further research. (No one has yet published a "layman's book" on robust statistics.)

The problem with classical statistics (which is based on moments) is that it's impossible to reliably estimate the higher-order moments such as the variance (i.e. the second moment). That makes it unreliable for modeling the transient behavior we see in stock trading. We need more nonlinear, nonparametric methods to apply to the trading problem.
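
To make the outlier argument concrete, here is a minimal C# sketch. The class name `RobustDemo` and the sample numbers are made up for illustration and are not taken from any Wealth-Lab code. It compares the mean and the sample standard deviation with the median when a single extreme value enters a small sample.

```csharp
using System;
using System.Linq;

public static class RobustDemo
{
    // Arithmetic mean (first moment).
    public static double Mean(double[] x) => x.Average();

    // Sample standard deviation (square root of the second central moment, n - 1 divisor).
    public static double StdDev(double[] x)
    {
        double m = Mean(x);
        return Math.Sqrt(x.Sum(v => (v - m) * (v - m)) / (x.Length - 1));
    }

    // Median: middle value of the sorted data
    // (average of the two middle values for an even count).
    public static double Median(double[] x)
    {
        double[] s = x.OrderBy(v => v).ToArray();
        int mid = s.Length / 2;
        return s.Length % 2 == 1 ? s[mid] : (s[mid - 1] + s[mid]) / 2.0;
    }
}
```

With `{1, 2, 3, 4, 5}` both the mean and the median are 3. Replacing the 5 with 50 moves the mean to 12 and the standard deviation from about 1.58 to about 21.27, while the median stays at 3.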

Well, these limit entry systems have a long tradition in the Wealth-Lab rankings.

They are like racing cars built to the specs.

QUOTE:

Hard to trade?

That means this kind of system shows spectacular results in end-of-day backtests but is hard to trade in real life.

First, there are a lot of limit orders every day. You need to find a broker that accepts such a large number of limit orders.

Second, there is a built-in restriction on the number of open positions. With 15% position size and 1.1:1 margin there are never more than 110/15 ≈ 7.3, so at most 7, open positions at the same time. In real-time trading you'll have to make sure that all outstanding orders are cancelled as soon as 7 positions are open.
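
The arithmetic behind this cap can be written out as a small helper. This is only a sketch; `MaxOpenPositions` is a hypothetical name, not a Wealth-Lab function.

```csharp
using System;

public static class PositionMath
{
    // Maximum number of simultaneously open positions when every position
    // uses a fixed percentage of equity and margin scales the buying power.
    public static int MaxOpenPositions(double positionSizePercent, double marginFactor)
    {
        double buyingPowerPercent = 100.0 * marginFactor;   // 1.1:1 margin -> 110 % of equity
        return (int)Math.Floor(buyingPowerPercent / positionSizePercent);
    }
}
```

`PositionMath.MaxOpenPositions(15, 1.1)` returns 7, because 110 / 15 is about 7.33 and a fractional position cannot be opened.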

Third, there are some inaccuracies when a limit entry system is backtested on EOD data. In a backtest on daily data it is impossible to tell at what time a limit order is filled, so the sequence of fills in a backtest is certainly different from the sequence in real trading. This may also change the results.
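
The timing problem can be illustrated with a small sketch. The `Bar` record and the helper names are hypothetical and not part of the Wealth-Lab API. A daily bar only reveals whether the low reached the limit; the time of the fill becomes visible only when intraday bars are scanned in chronological order.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public record Bar(DateTime Time, double Open, double High, double Low, double Close);

public static class FillOrder
{
    // Daily view: a buy limit order is assumed filled if the day's low reached the limit.
    public static bool FilledOnDailyBar(Bar day, double limit) => day.Low <= limit;

    // Intraday view: time of the first bar whose low touches the limit,
    // or null if the limit is never reached.
    public static DateTime? FirstFillTime(IEnumerable<Bar> intradayBars, double limit)
    {
        foreach (Bar bar in intradayBars.OrderBy(x => x.Time))
        {
            if (bar.Low <= limit) return bar.Time;
        }
        return null;
    }
}
```

Ranking the symbols of one day by `FirstFillTime` gives the fill sequence a live account would have seen. The daily bar alone cannot provide that ordering.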

Conclusion: Think twice before considering such a system for real trading.

QUOTE:

I'm all for applying robust statistical methods to trading strategies.

Yes, this strategy is first of all a demonstration of the all-new "Moving Percentile" indicator, which can estimate median values (percent = 50), quartiles (percent = 25, percent = 75), interquartile ranges (the difference of the latter two) and all other percentiles over a selectable lookback period.

This makes the result robust in the presence of outliers (which we always find in financial time series).

MP ( = Moving Percentile) is part of the finantic.Indicators extension.

Nice strategy! I really like simple ones like this: with just a bit of tweaking on top, you have something tradable in real life.

Just one question regarding the rankings: how do they cope with systems that generate more orders than the capital would allow in practice? Without a specified weight, each run should produce substantially different results over a 10-year period. On retracement days, with a big enough dataset, the system can produce dozens of signals, many of which are missed because there is not enough capital, and I'm guessing that (without considering more granular quotes) the order in which they are taken is just random.

Yes, it is random unless the Strategy author uses a Transaction Weight.

Don't forget, you can always use the WL8 Quotes tool to make trading a system like this viable!

Sure, it is then possible to trade; but it removes the deterministic (if I can call it that) aspect of the backtesting.

I prefer the realism introduced by using a weight; like the other DrKoch's published strategy: OneNight w Moving Percentile and Weight (which seems to also work pretty well).

By the way: back at my main computer (with WL8) I was just trying to run this strategy and it seems that this indicator is not part of the current build 1 of Finantic.Indicators. Is there an update that was not yet published?

QUOTE:

Is there an update that was not yet published

Yes. MP (Moving Percentile) comes with build 2 of finantic.Indicators which is on the way...

Build 2 will be released soon, hopefully today.

finantic Indicators Build 2 is now available.

I barely had time to ask about it… ;-)

Thank you!

QUOTE:

realism introduced by using a weight

The weight makes the back-test deterministic, you'll get the same results with every run.

BUT: The weight does not make the back-test more realistic.

Basically it is impossible to tell which limit orders will be filled if the back-test is run on EOD data. Intraday data is needed to make such a back-test realistic.
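
Why a weight makes the run repeatable can be sketched as follows. The types and names are hypothetical, and the rule "highest weight first" is an assumption made for this example; the actual priority rule depends on the backtester's settings. The sketch only illustrates determinism. It says nothing about which orders would really have been filled.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public record Candidate(string Symbol, double Weight);

public static class SignalSelection
{
    // Deterministic: always keep the 'slots' candidates with the highest weight.
    // Ties are broken by symbol so that repeated runs agree exactly.
    public static List<string> ByWeight(IEnumerable<Candidate> candidates, int slots) =>
        candidates.OrderByDescending(c => c.Weight)
                  .ThenBy(c => c.Symbol, StringComparer.Ordinal)
                  .Take(slots)
                  .Select(c => c.Symbol)
                  .ToList();

    // Unweighted: the candidates are shuffled, so different seeds can pick different symbols.
    public static List<string> Shuffled(IEnumerable<Candidate> candidates, int slots, int seed)
    {
        var rng = new System.Random(seed);
        return candidates.OrderBy(_ => rng.Next())
                         .Take(slots)
                         .Select(c => c.Symbol)
                         .ToList();
    }
}
```

`ByWeight` returns the same symbols on every run, whereas `Shuffled` depends on the seed, which mirrors the run-to-run variation of an unweighted backtest.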

If you limit the number of entry signals using this option combined with Weight, it is more realistic and deterministic:

QUOTE:

If you limit the number of entry signals using this option combined with Weight, it is more realistic and deterministic:

Indeed, that's what I meant: the combination of setting a weight and then limiting the entry signals (as far as I can tell, the order is set by the weight; to be honest I mostly trade futures and only recently restarted looking at stock trading, after a long hiatus) results in a deterministic and realistic backtesting.

QUOTE:

it is random unless the Strategy author uses a Transaction Weight.

The combination of

1.) limit order entry

2.) transaction weight

3.) restricted number of positions

is now considered "peeking". So the variation with weight (" OneNight w Moving Percentile and Weight") was removed from published strategies.

I created a version with a ranking mechanism (https://www.wealth-lab.com/Strategy/DesignPublished?strategyID=50) which does not look as profitable as its sisters, but it is much more deterministic, realistic and tradeable.

There was a request to describe better what this strategy is doing. Let me try:

This is an EOD system (no orders submitted while exchanges are open).

It has a very short hold time, in fact the shortest imaginable hold time for a non-trivial EOD system: Whenever an entry-limit order is filled we hold the position for the rest of the trading day and one night. (Hence the name of the Strategy).

Positions are closed the next morning, when exchanges open, with a market order.

This means there are no fancy exit rules, just the simplest possible: Exit Market on Open the next day.

All the magic comes from the entry. This is a limit order and the critical piece is the entry-level-price.

The optimal limit price is different every day and for every stock. It depends on recent characteristics of the market and recent characteristics of the stock. (This makes things interesting).

The main ingredient is volatility - short term volatility to be precise. One of the most precise measurements of extreme short term volatility comes from the TrueRange indicator (TR).

In order to find the best volatility estimate for the current day and the current stock we proceed as follows:

Observe all the TrueRange values of the last 100 days. Sort all the values into two baskets: Basket one contains the 25 highest values, basket two contains the 75 lowest values. Then you take the lowest value from basket one.

(Yes, the lowest value of the 25 highest values)

This value is also known as the "third quartile" (https://en.wikipedia.org/wiki/Quantile) or the "75-Percentile".

The calculation belongs to the class of "robust statistics" (https://en.wikipedia.org/wiki/Robust_statistics) and has the tremendous advantage that it does not get confused by outliers/extreme values, which occur all too often in financial time series.
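
The basket procedure can be written down almost literally. This is a sketch of the description above, not the finantic.Indicators implementation; `LowestOfHighest` is a hypothetical helper. Percentile conventions that interpolate between neighbouring values may return a slightly different number.

```csharp
using System;
using System.Linq;

public static class Baskets
{
    // Returns the lowest value among the 'topCount' highest values,
    // i.e. the smallest member of "basket one".
    public static double LowestOfHighest(double[] values, int topCount)
    {
        if (topCount < 1 || topCount > values.Length)
            throw new ArgumentOutOfRangeException(nameof(topCount));

        double[] sorted = values.OrderBy(v => v).ToArray();   // ascending order
        return sorted[values.Length - topCount];
    }
}
```

For the values 1 to 100 and `topCount = 25`, the result is 76 (the 25 highest values are 76 to 100). Replacing the 100 with 1,000,000 still gives 76, which is the robustness against outliers in action.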

Back to our trading system: We take the 75-Percentile of the last 100 days of the TR indicator and multiply it by a scaling factor (which depends on portfolio size and other portfolio characteristics). This is our most probable walking distance for the price movement of the next day.

Consequently we place the limit order exactly this distance below the last close price - voilà.
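
Put together, the entry level might be computed like this. The sketch uses the standard True Range definition; the helper names and the example factor of 1.5 are assumptions for illustration, since the strategy's actual scaling factor is not given here.

```csharp
using System;

public static class EntryLimit
{
    // True Range of a bar: the largest of high - low,
    // |high - previous close| and |low - previous close|.
    public static double TrueRange(double high, double low, double prevClose) =>
        Math.Max(high - low,
                 Math.Max(Math.Abs(high - prevClose), Math.Abs(low - prevClose)));

    // Buy limit placed 'factor' times the 75-Percentile of True Range below the last close.
    public static double LimitPrice(double lastClose, double tr75, double factor) =>
        lastClose - factor * tr75;
}
```

For example, `EntryLimit.TrueRange(105, 101, 100)` is 5 because the gap from the previous close dominates, and `EntryLimit.LimitPrice(100, 2.0, 1.5)` places the limit at 97.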

What I forgot to mention: We repeat the calculation above each day, which means that there is a sliding 100 day data window. The Percentile/Quartile is calculated every day for the then-current sliding window. Thus the name "Moving Percentile".

Remark: The MovingPercentile (MP) indicator is available with the finantic.Indicators extension.

Thanks for the detailed explanation. I read the earlier post(s) too. Now, ...

Making an a priori selection that results in more profitable/improved metrics is fine.

Making an a priori selection because brokers only allow a certain number of limit orders is wrong. The solution is to use the QT tool or, in a more general sense, to park limit orders on the client side. When a limit order is triggered, it is placed with a high chance of being filled. The market decides which limit orders will be filled. An a priori selection down to 7, 10 or whatever number simply reduces the strategy's performance. You can measure it easily. Do not introduce a further selection criterion just to fit the broker's limitation! (I have been saying this for a year now.)

To overcome the granularity restrictions of a simple EOD DIP strategy, it can of course be implemented at the intraday level. This has some other advantages, such as a kind of data validation. Of course, other standard techniques like trailing stops, SL+TP levels and different analyses can be applied too.

Currently, the intraday implementation and the QT abilities do not come together. (I have been saying this for a year now.)

QT-Tool

Advantage: "Parks" limit orders until they are triggered

Advantage: Follows the price from market opening

Disadvantage: only EOD, intraday activities not possible

SM - Intraday

Advantage: price movements can be evaluated

Disadvantage: "Parking" (trigger) limit order is not possible

Disadvantage: placing order at market opening is a handicap if the price is not known before the session. (like sessionOpening).

If you analyse these kinds of DIP strategies further, you will realize that many trades are made within the first seconds and minutes of the day. E.g. the performance may drop from 150% to 25% (arbitrary numbers) just because of the volatility in the first minutes.

To trade this kind of strategy properly, the requirements could be:

1. LimitOrderWithTrigger(...) with usage in the SM

2. A workaround for the market opening in the SM.

Having these tools at hand, each EOD DIP strategy can easily be transformed into an intraday strategy. But most importantly, the broker limitation for limit orders could be handled with the SM. All the EOD limitations would be solved.
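
What such a trigger could look like is sketched below. This is purely illustrative: `ShouldRelease` and its threshold parameter are hypothetical and not an existing Wealth-Lab call. The idea is only that an order stays parked on the client until the price gets close to the limit.

```csharp
public static class ParkedLimit
{
    // A parked buy limit order is released to the broker once the last price
    // comes within 'thresholdPercent' of the limit price (or trades below it).
    public static bool ShouldRelease(double lastPrice, double limitPrice, double thresholdPercent) =>
        lastPrice <= limitPrice * (1.0 + thresholdPercent / 100.0);
}
```

With a limit of 97 and a threshold of 1 percent the release level is about 97.97, so a last price of 98.50 keeps the order parked and a last price of 97.90 releases it. Only released orders count against the broker's order limit.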

Just my 2 cents

Just another note.

An implementation at the intraday level does not necessarily change the fact that it is an EOD strategy. By this I mean that the implementation allows, for example, SL and TP orders to be backtested. In live use, the order can still be placed as before, but with two side orders (SL+TP). The intraday view can be used purely for analysing and adjusting the EOD strategy, or it can even be semantically identical.

Probably, when testing such strategies, intraday data is indispensable.

For example, we test the strategy for the last six months on the daily timeframe:

We get a yield of 20-30% and a smoothly growing equity curve.

But as soon as we connect intraday data, the picture changes.

The strategy goes from profitable to unprofitable, with a negative result.

And even using Monte Carlo on a daily timeframe (without intraday data) does not predict the result that is obtained using intraday data.

Which Monte Carlo method did you use? And how many runs?

You'd think that would have got it, but anything's possible. It looks like the bad result depends on some event near the end of 2022 - specifically a position (or a group of them) being held around the end of October. Otherwise the "up and downs" are pretty similar.

QUOTE:

Otherwise the "up and downs" are pretty similar.

You could use CompareTool (available with the finantic.ScoreCard extension, see https://www.wealth-lab.com/extension/detail/finantic.ScoreCard#screenshots, section Compare Tool) to show a same-graph comparison of the two equity curves. This would make it easier to find out where the differences come from...

Would the operation of the MP be somewhat like this?

CODE:

    /* This code optimizes performance using a sliding window and binary search.
       Initially, the list is sorted once. Subsequent updates maintain order by
       precisely inserting new elements and removing old ones, avoiding full resorts.
       This efficient approach is ideal for large datasets and real-time processing. */
    var window = new List<double>(_lookback);
    for (int i = 0; i < source.Count; i++)
    {
        if (i < _lookback - 1)
        {
            Values[i] = double.NaN; // Not enough data available yet
            continue;
        }
        if (i == _lookback - 1)
        {
            // Fill the window for the first time when we have enough data
            for (int j = i - _lookback + 1; j <= i; j++)
            {
                window.Add(source[j]);
            }
            window.Sort(); // Sort the initial window
        }
        else
        {
            // Find and remove the oldest element
            window.Remove(source[i - _lookback]);
            // Insert the new element while maintaining the window sorted
            double newElement = source[i];
            int index = window.BinarySearch(newElement);
            // If the element is not found, BinarySearch returns the bitwise complement of the insertion index
            if (index < 0) index = ~index;
            window.Insert(index, newElement);
        }
        // Calculate the index for the desired percentile
        int n = (int)((_percentile / 100.0) * (_lookback - 1));
        Values[i] = window[n];
    }
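
For experimenting outside Wealth-Lab, the same idea can be packed into a self-contained function. This is a sketch under assumptions: `MovingPercentileSketch` is a made-up name, the input is assumed to contain no NaN values, and nothing here is claimed to match how the actual MP indicator is implemented. Unlike the snippet above, it also locates the outgoing value by binary search instead of a linear `Remove`.

```csharp
using System;
using System.Collections.Generic;

public static class MovingPercentileSketch
{
    public static double[] Compute(IReadOnlyList<double> source, int lookback, double percentile)
    {
        if (lookback < 1) throw new ArgumentOutOfRangeException(nameof(lookback));

        var result = new double[source.Count];
        var window = new List<double>(lookback);               // always kept sorted ascending
        int n = (int)(percentile / 100.0 * (lookback - 1));    // same index rule as the snippet above

        for (int i = 0; i < source.Count; i++)
        {
            if (i >= lookback)
            {
                // The outgoing value is in the window, so BinarySearch finds an equal element.
                window.RemoveAt(window.BinarySearch(source[i - lookback]));
            }

            // Insert the incoming value at its sorted position.
            int pos = window.BinarySearch(source[i]);
            window.Insert(pos < 0 ? ~pos : pos, source[i]);

            // Emit a value only once the window is full.
            result[i] = window.Count == lookback ? window[n] : double.NaN;
        }
        return result;
    }
}
```

`MovingPercentileSketch.Compute(new double[] { 5, 1, 4, 2, 3 }, 3, 50)` yields NaN, NaN, 4, 2, 3, i.e. the median of each 3-value window once enough data is available.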

@DrKoch - I just now decided to search for "OneNight" and found your comments above. Ha! You were way ahead of me.

@Glitch - the linked strategy idea in the post header is great. I couldn't find a feature request for this but if one exists, please let me know. I'd love a way to separate discussions on specific strategies from general WL questions.

We already have linked strategy names in the post header, what are you requesting??

Oh, sorry. I didn't realize that. How do we get this strategy linked in the header?

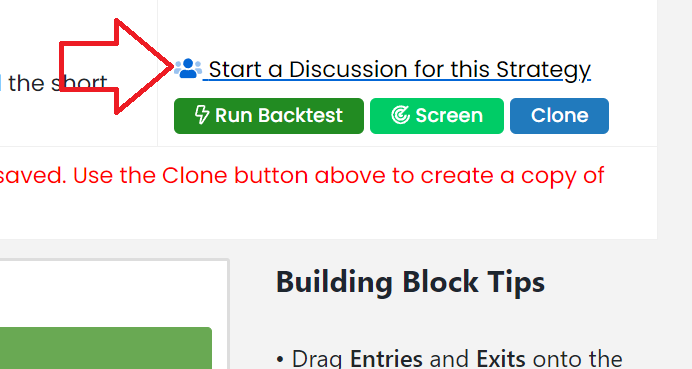

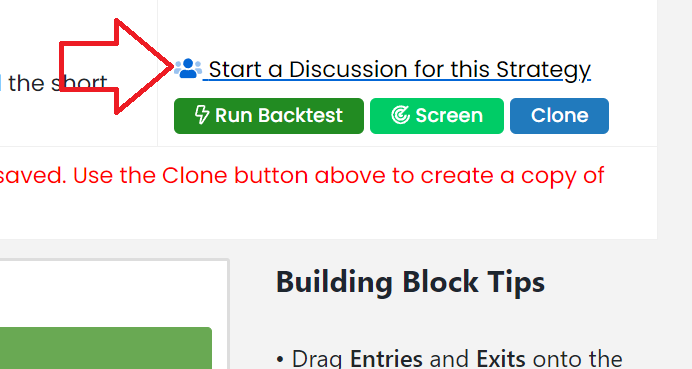

Go to a Published Strategy, click on it, and then this -

(if there's already a discussion, it will read "Join the Discussion...")
